FHE

Aug 26, 2025

MHE from RLWE: When MPC Meets Homomorphic Encryption

Discover how Multiparty Homomorphic Encryption (MHE) overcomes the communication overhead and synchronization limits of Secure Multiparty Co...

FHE

Jul 14, 2025

Mathematical Foundations

Ideals

Building New Rings: Quotients

Roots of Unity

Cyclotomic Polynomials: Collecting the Primitives

Homomorphisms: Structure-Preserving Maps

The Chinese Remainder Theorem: A Powerful Decomposition

SIMD Operations in Fully Homomorphic Encryption

Where Our Polynomials Live

The Magic of Cyclotomic Factorization

The Chinese Remainder Breakthrough

Galois Automorphisms: The Key to Data Movement

The Frobenius Map: A Special Automorphism

One-Dimensional Rotations: Making Data Move

Clean Rotations Through Masking

SIMD Operations in BGV with Lattigo

Conclusion

Acknowledgements

Fully Homomorphic Encryption (FHE) promises a revolutionary capability: performing arbitrary computations on encrypted data without ever decrypting it. This breakthrough enables secure cloud computing where sensitive data remains protected even during processing. However, early FHE schemes faced a critical bottleneck. Consider a simple task like adding two encrypted vectors of length 1000. This would require 1000 separate homomorphic additions, each involving expensive operations on large polynomials. For real-world applications processing massive datasets, this element-by-element approach creates prohibitive computational overhead.

Modern FHE schemes like BGV, BFV and CKKS solve this challenge through an elegant mathematical insight: they can pack multiple plaintext values into a single ciphertext and perform operations on all packed values simultaneously.

Instead of encrypting individual numbers, these schemes:

This Single Instruction, Multiple Data (SIMD) approach transforms FHE from a theoretical curiosity into a practical tool. A single homomorphic multiplication can now process an entire vector at once, delivering speedups of several orders of magnitude.

This post explores the mathematical foundations underlying these SIMD operations. We'll see how abstract concepts from algebra and number theory combine to create a powerful framework for parallel homomorphic computation. While the mathematics is sophisticated, understanding these foundations is crucial for implementing efficient FHE applications and pushing the boundaries of what's possible with encrypted computation.

This post assumes you're comfortable with the basics of groups and rings, things like the distributive laws and fundamental properties of rings with identity. If you've worked with elementary group theory, you should be in good shape.

Definition. Let be a ring. A subset is called an ideal when:

That second condition is what makes ideals special, they "absorb" multiplication by any element in the ring. It's like having a mathematical black hole that pulls in anything you multiply with it.

Example. In the integers , consider the set of all multiples of . This forms an ideal because multiplying any multiple of by any integer gives you another multiple of .

A principal ideal is the simplest kind, one generated by a single element. Given , we can create the ideal

This captures the smallest ideal that contains . It's everything you can get by multiplying by elements from the ring.

Given a ring and an ideal , we can construct a brand new ring called the quotient ring .

The elements of are cosets, think of them as equivalence classes for . We define operations on these cosets by:

Examples:

: This gives us the familiar "clock arithmetic" - integers modulo with elements .

Polynomial quotients: Taking and , we get polynomials modulo - essentially polynomial arithmetic where we replace high powers using the relation .

In any ring , an -th root of unity is an element such that . But we're particularly interested in primitive -th roots of unity for all positive integers .

Here's a key insight: is a primitive -th root of unity if and only if .

Let . The -th roots of unity are the distinct powers , so itself is primitive.

The key observation is that if and only if is divisible by . Here's why: write with . Then . But since is primitive, this only happens when .

Now, if , then , so is not primitive.

Conversely, if and , then divides . Since and are coprime, we must have divides . This means the first positive power of that equals is the -th power.

Euler's totient function counts exactly these "good" exponents - the positive integers that are coprime to . So there are precisely primitive -th roots of unity.

Example. In the complex numbers, the primitive -th roots of unity are exactly where .

Euler's totient function counts the positive integers up to that are coprime to :

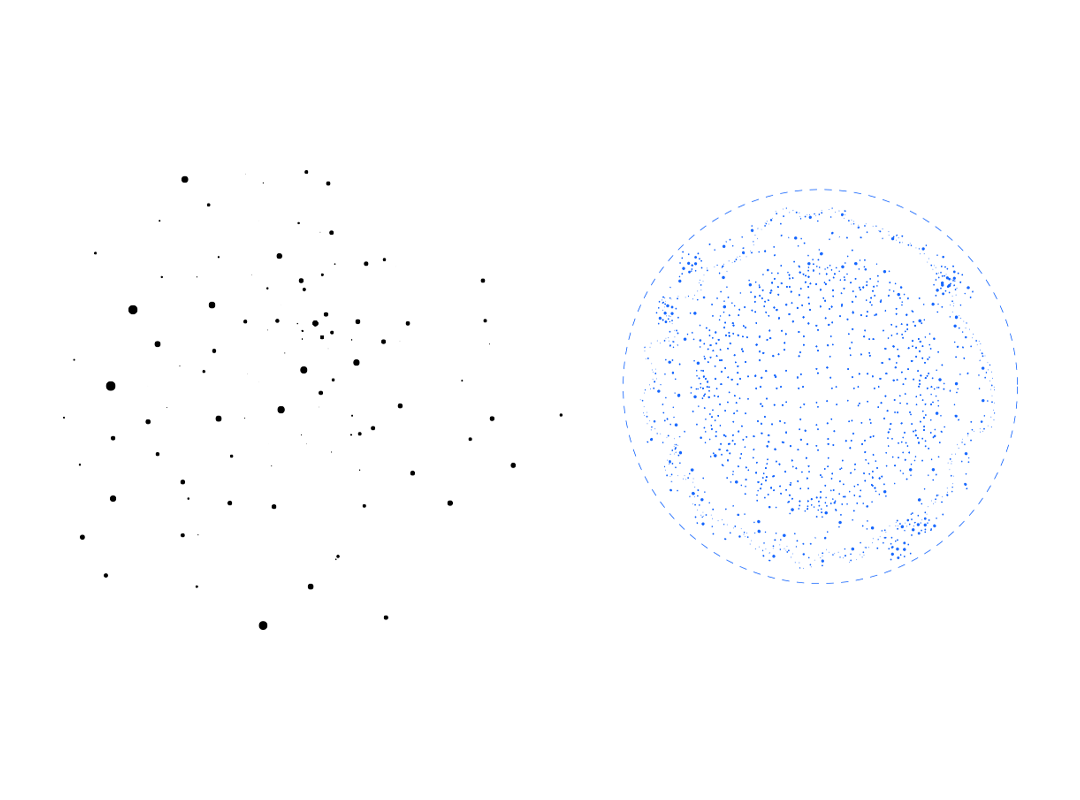

Let's work out a specific case. We'll find the 8th roots of unity in the quadratic extension

In a finite field of size , the multiplicative group has order .

Here, , so . Since divides , we expect all eight 8th roots of unity to live inside .

We write elements of in the form with , where .

Using Sage (or by hand if you're feeling ambitious):

R.<t> = PolynomialRing(GF(3))

F.<x> = GF(3^2, modulus = t^2 + 1)

roots8 = [z for z in F if z^8 == 1]

print(roots8)

This gives us:

All eight solutions to in .

An element is primitive when its order is exactly . Euler's totient tells us , so exactly four of our eight roots should be primitive.

g = F.multiplicative_generator()

primitive = [g^k for k in (1,3,5,7)]

print(primitive)

This reveals:

These are our four primitive 8th roots. The remaining roots have orders 1, 2, or 4.

The -th cyclotomic polynomial is designed to capture exactly the primitive -th roots of unity. Over the rationals:

This polynomial has degree - one factor for each primitive root.

Let's construct

By definition:

This gives us a monic quartic (degree ) that's irreducible over .

A group homomorphism between groups and is a function that respects the group operation:

Two important subsets come with every homomorphism:

Both form subgroups of their respective groups.

Similarly, a ring homomorphism between rings and preserves both operations:

When a homomorphism is bijective, we call it an isomorphism. Isomorphic structures are essentially identical from an algebraic perspective - they have the same "shape."

An automorphism is an isomorphism from a structure to itself. The collection of all automorphisms of a group forms a group under composition, denoted .

Let be a commutative ring with unity, and let be comaximal ideals (meaning whenever ). Define . Then we have an isomorphism:

We'll prove this by induction on the number of ideals.

Base Case (): Let be comaximal ideals with . We want to show .

Consider the natural map:

By the First Isomorphism Theorem, it suffices to show is surjective with kernel .

First Isomorphism Theorem for Rings: If is a surjective ring homomorphism with kernel , then induces a natural ring isomorphism

Kernel verification: We have

Surjectivity: Take any . Since , we can find and with .

Define .

Then and , so .

Inductive Step: Suppose the theorem holds for ideals. For comaximal ideals , observe that are pairwise comaximal.

By the inductive hypothesis:

Applying the base case to and :

Combining these gives the desired result.

Now we get to the exciting part - how all this abstract algebra enables powerful parallel computation in encrypted data.

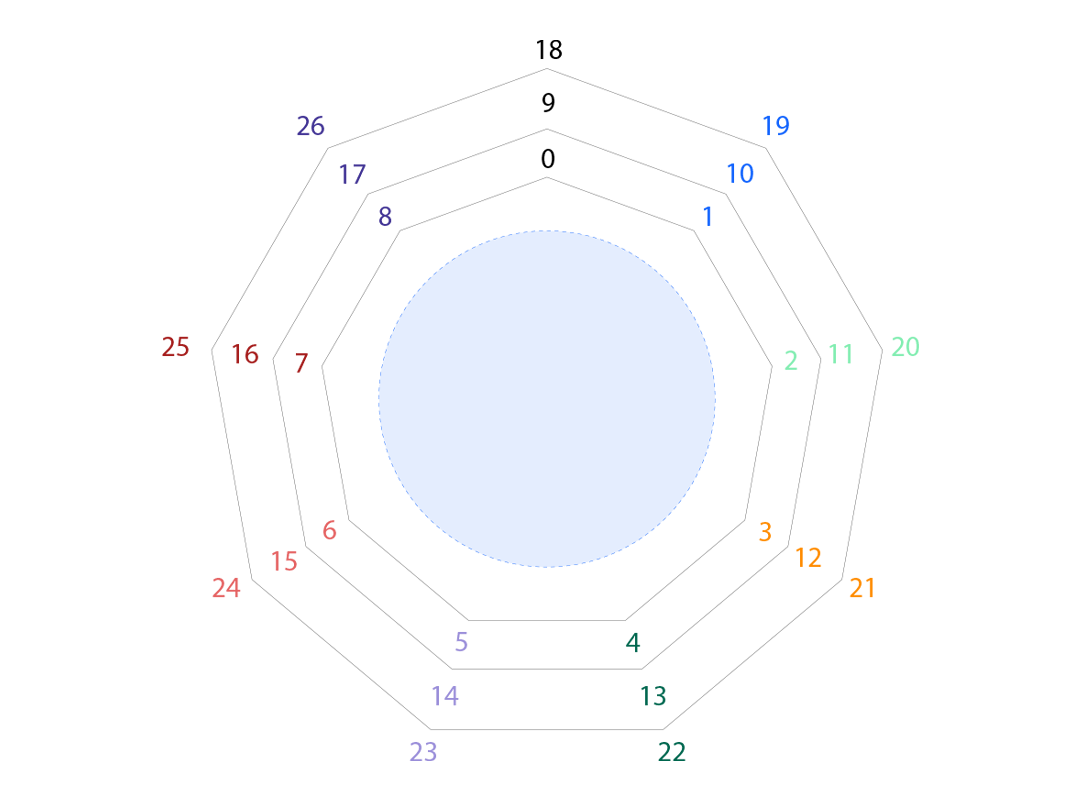

We start with a quotient ring , where is the -th cyclotomic polynomial. This is our fundamental workspace.

In homomorphic encryption, we don't encrypt integers directly. Instead, we work over a prime field that serves as our plaintext space. Reducing all coefficients modulo gives us the plaintext ring:

The overline just reminds us we've done the modular reduction.

Here's where number theory delivers something beautiful. As long as doesn't divide , the cyclotomic polynomial completely factors over into irreducible pieces of equal degree:

Each is irreducible with degree - the multiplicative order of modulo .

Let's pick any irreducible factor, say , and define:

Since is irreducible, becomes a field with elements. Every element in can be written as for some polynomial . Notice that is a root of , making it a primitive -th root of unity.

Why the coset is instantly a root of : Forming the quotient ring

is nothing more than declaring inside the ring that the polynomial now equals zero.

- The projection sends every polynomial to its coset. Write

This is the “image of ’’.- Because is in the ideal we factor by, its coset is :

But is a homomorphism, so . Hence in . By construction, behaves exactly like a root of .

The polynomial has distinct roots in , specifically for each in the unit group . These roots distribute evenly among the irreducible factors, with each factor getting exactly roots.

To understand the distribution, consider the subgroup:

This subgroup has order and contains .

When we form the quotient group , we get distinct cosets:

Choosing representatives from each coset, we can arrange things so each has exactly the roots where .

Now comes the payoff. We can establish isomorphisms for each factor:

Combining this with the fact that

gives us the crucial isomorphism:

This is the key insight: we can perform component-wise operations on vectors in by doing ring operations in !

If we have:

Then:

Converting between the coefficient representation in and the slot representation in is computationally straightforward using the Number Theoretic Transform (NTT).

Let's work through a concrete example with specific parameters:

The 8th cyclotomic polynomial is . Over , this splits as:

R.<X> = PolynomialRing(GF(3))

factor = (X^4 + 1).factor()

print(factor)

The four primitive 8th roots of unity are:

Each irreducible quadratic "owns" exactly two of them:

| Polynomial | Roots in |

|---|---|

The coset structure is determined by:

This gives us the isomorphism:

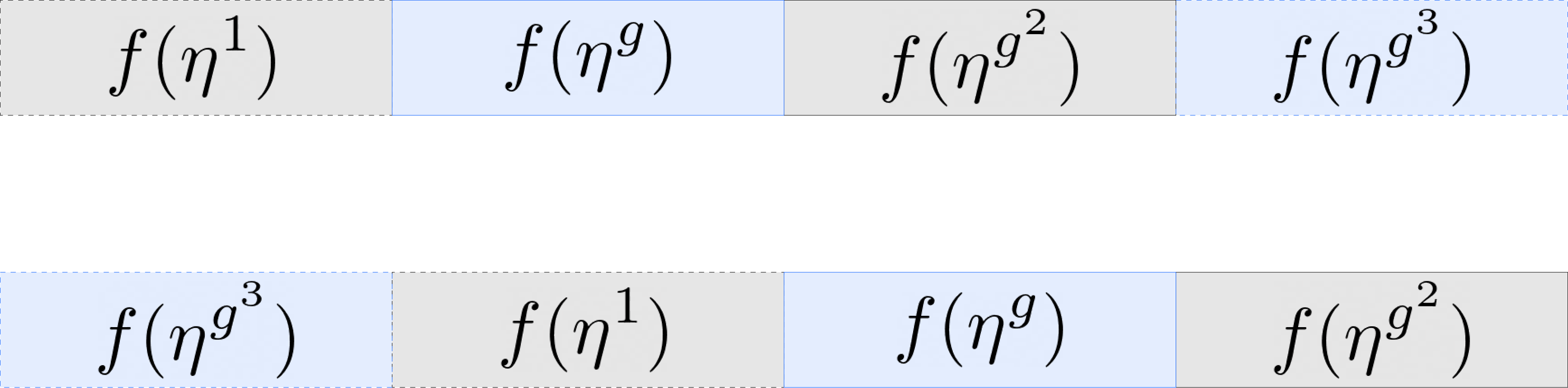

We want to encode the following slot values:

where .

Any polynomial in has a unique representative:

This means we need 4 evaluations of our function to interpolate it using NTT. Because the Frobenius automorphism fixes each component, we have:

Indeed

,

,

,

and , , so is a primitive -th root of unity.

We need evaluated at the odd powers of :

Factor out and absorb it into the coefficients:

Thus

Inverse NTT returns . Remove the twist:

All coefficients are now back in .

Thus:

We can verify that the polynomial obtained is correct by checking the slot values:

Everything matches the given slot values, confirming that the reconstruction is correct.

Let's do the same transformation for the case

First we find the missing evaluations

Then going through the same inverse NTT process we get

We have

Let’s check that homomorphic addition is well-defined:

Finding the missing evaluations:

and running inverse NTT to reconstruct the polynomial

F3 = GF(3)

F9.<x> = GF(9, modulus = x^2 + 1)

R.<X> = PolynomialRing(F3)

slot0, slot1 = (2, 1)

w = x + 1

omega = w^2

omega_inv = omega.inverse()

y0, y2 = F9(slot0), F9(slot1)

y1, y3 = y0^3, y2^3

y = [y0, y1, y2, y3]

b = []

for j in range(4):

s = F9.zero()

for k in range(4):

s += y[k] * (omega_inv)^(j*k)

b.append(s)

a = [F3(b[j] * w^(8 - j)) for j in range(4)]

f = (a[0] + a[1]*X + a[2]*X^2 + a[3]*X^3) % (X^4 + 1)

print(f)

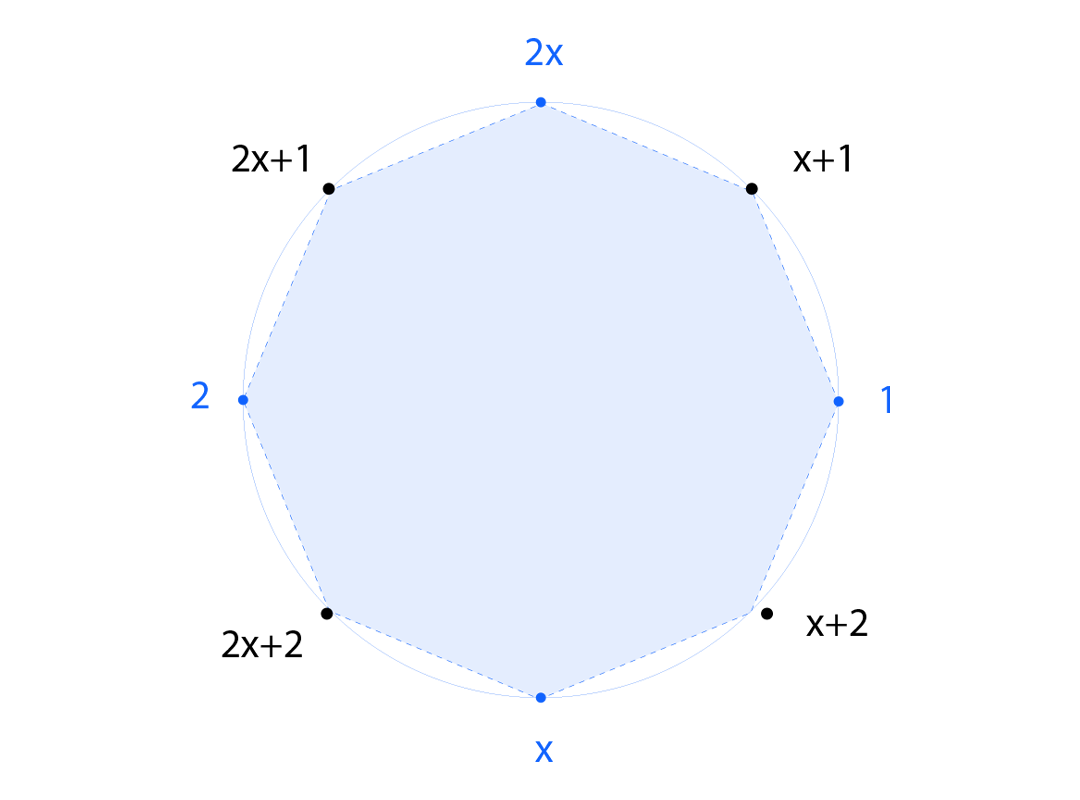

To understand how to move data between slots, we return to our ring , with representing the image of in .

For each , we can define:

This is well-defined because if , then whenever is a primitive -th root of unity, so is . This means that if , then as well, giving us:

These maps have a natural group structure: since in , we have:

This gives us an injective group homomorphism:

These maps are precisely the Galois automorphisms of our cyclotomic extension.

The Frobenius automorphism deserves special attention:

For every , we have . Importantly:

Under our correspondence between and , if we let and apply :

This means acts slot-wise as the Frobenius map on - crucial for understanding rotations.

Consider an element such that forms a complete set of coset representatives for in . This means must lie in .

In the ideal case where , using representatives , our slot isomorphism becomes:

When we apply :

Since the last entry wraps around to , the automorphism rotates the slots one position to the left! More generally, rotates left by positions, while rotates right by positions.

When , things get trickier. If for some :

This gives a rotation, but the last slot gets perturbed by . This isn't a clean rotation unless the first slot contains a value in , where acts trivially.

Even when slots contain values outside , we can achieve perfect rotations using a clever masking technique.

For a rotation by positions, we create masking elements:

For an element :

Combining these gives a perfect left rotation:

This produces an element whose slots are exactly those of rotated places to the left, regardless of whether slot values lie outside .

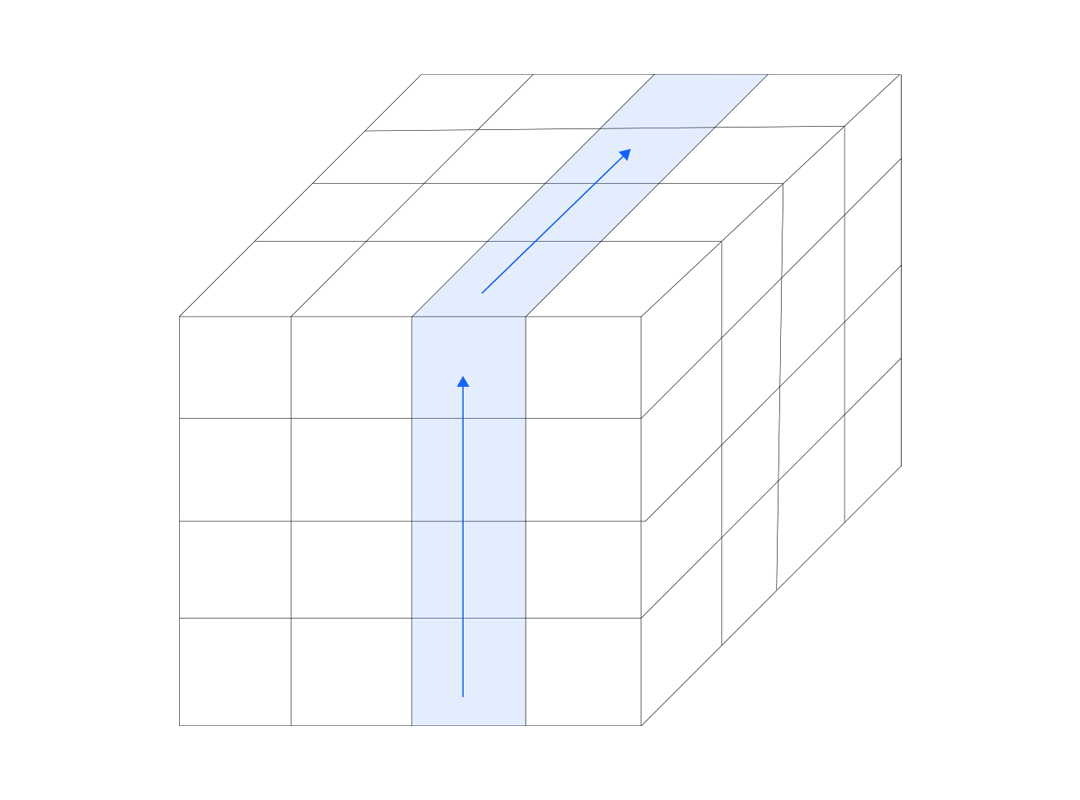

For more complex data arrangements, we can organize our slots as a multi-dimensional hypercube. Let be our slot count factored into positive integers.

We choose generators and arrange the coset representatives as:

This creates an -dimensional hypercube with side-lengths , where every slot has coordinates .

For each dimension , the Galois automorphism shifts every hyper-column forward by one step in the -th coordinate. Applying shifts by positions, while shifts backwards.

For true rotations without Frobenius disturbance, we use masking elements that place ones in the first slots of each hyper-column and zeros elsewhere.

The formula for rotating left by positions along the -th axis is:

This example demonstrates slot-wise homomorphic addition using the Lattigo library’s BGV API. With BGV’s powerful SIMD capabilities, we can pack an entire vector of integers into a single ciphertext, perform encrypted computations on each vector entry in parallel, and then recover the results after decryption.

package main

import (

"fmt"

"log"

"math/rand"

"time"

"github.com/tuneinsight/lattigo/v6/examples"

"github.com/tuneinsight/lattigo/v6/schemes/bgv"

)

func main() {

paramDef := examples.BGVParamsN12QP109

params, err := bgv.NewParametersFromLiteral(paramDef)

if err != nil {

log.Fatalf("params error: %v", err)

}

kgen := bgv.NewKeyGenerator(params)

sk := kgen.GenSecretKeyNew()

pk := kgen.GenPublicKeyNew(sk)

encoder := bgv.NewEncoder(params)

encryptor := bgv.NewEncryptor(params, pk)

decryptor := bgv.NewDecryptor(params, sk)

evaluator := bgv.NewEvaluator(params, nil)

T := params.PlaintextModulus()

slots := params.MaxSlots()

rand.Seed(time.Now().UnixNano())

a := make([]uint64, slots)

b := make([]uint64, slots)

expected := make([]uint64, slots)

for i := 0; i < slots; i++ {

a[i] = uint64(rand.Int63n(int64(T)))

b[i] = uint64(rand.Int63n(int64(T)))

expected[i] = (a[i] + b[i]) % T

}

ptA := bgv.NewPlaintext(params, params.MaxLevel())

ptB := bgv.NewPlaintext(params, params.MaxLevel())

if err := encoder.Encode(a, ptA); err != nil {

log.Fatal(err)

}

if err := encoder.Encode(b, ptB); err != nil {

log.Fatal(err)

}

ctA, err := encryptor.EncryptNew(ptA)

if err != nil { log.Fatal(err) }

ctB, err := encryptor.EncryptNew(ptB)

if err != nil { log.Fatal(err) }

ctC, err := evaluator.AddNew(ctA, ctB)

if err != nil { log.Fatal(err) }

ptRes := decryptor.DecryptNew(ctC)

res := make([]uint64, slots)

if err := encoder.Decode(ptRes, res); err != nil {

log.Fatal(err)

}

fmt.Printf("i\t a\t b\t (a+b mod T)\t result\t match\n")

for i := 0; i < slots; i++ {

fmt.Printf("%d:\t%d\t%d\t%d\t\t%d\t%v\n",

i, a[i], b[i], expected[i], res[i], res[i] == expected[i])

}

}

The mathematical foundations explored in this post reveal how abstract algebra transforms fully homomorphic encryption from a theoretical curiosity into a practical tool for secure computation. The key insights we've covered include:

Algebraic Structure: The plaintext ring naturally decomposes into a product of extension fields through cyclotomic factorization. This decomposition is what makes slot-based packing possible.

Galois Automorphisms: The maps provide the mechanism for data movement between slots. These automorphisms preserve the ring structure while permuting slot contents according to the multiplicative group .

Rotation Techniques: Clean rotations require careful handling of the Frobenius map through masking operations. The hypercube organization extends this to multi-dimensional data arrangements, enabling sophisticated data movement patterns.

Practical Impact: These mathematical tools deliver speedups of several orders of magnitude compared to element-wise processing. A single homomorphic multiplication can now process an entire vector, making FHE viable for real-world applications in secure cloud computing, privacy-preserving machine learning, and confidential data analytics.

This post builds upon decades of research in both algebraic number theory and cryptography. Particular acknowledgment goes to Nigel Smart and Frederik Vercauteren for their foundational paper "Fully Homomorphic SIMD Operations", which established the theoretical framework for slot-based parallel computation in FHE schemes. Their work demonstrated how cyclotomic polynomial arithmetic could be leveraged to achieve true vectorization in homomorphic encryption.

The practical implementation insights draw heavily from Shai Halevi and Victor Shoup's comprehensive work "Design and implementation of HElib: a homomorphic encryption library", which translated these theoretical advances into efficient, usable software.