Security

Dec 16, 2025

In Part 1, we covered implementation bugs: the missing checks, skipped validations, and encoding ambiguities that turn provably secure protocols into exploitable systems. Those were failures at the code level: developers trusting inputs they should validate, using insufficient security parameters, or misunderstanding the cryptographic requirements. This part goes deeper. Here we examine attacks that stem from the protocols themselves, from design decisions that look sound in the paper but break under adversarial conditions the original authors didn't fully consider. We'll also look at a recent theoretical result that challenges the adaptive security of widely deployed threshold Schnorr schemes. These aren't bugs you can fix with a parameter check or an extra validation. They're fundamental issues that require protocol redesign or a careful understanding of when a protocol's security guarantees actually hold.

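The Multiplicative-to-Additive (MtA) share conversion is everywhere in threshold ECDSA. It turns a product of two secrets held by different parties into additive shares $\alpha$ and $\beta$ with $\alpha + \beta = ab \bmod q$, where $q$ is the order of the elliptic-curve group. The protocol uses Paillier encryption. Alice holds a secret $a$ and a Paillier keypair with modulus $N$. Bob holds a secret $b$. They proceed: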

Alice encrypts: Computes $c_A = \mathrm{Enc}_A(a)$ along with a zero-knowledge range proof that $a$ is in the correct range, and sends both to Bob.

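Bob responds: Samples a random mask $\beta'$, uses Paillier's additive homomorphism to compute $c_B = c_A^{\,b} \cdot \mathrm{Enc}_A(\beta') \bmod N^2 = \mathrm{Enc}_A(ab + \beta')$, sets his output share $\beta = -\beta' \bmod q$, and sends $c_B$ to Alice together with a proof that $b$ and $\beta'$ are in range.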

Alice decrypts: Verifies Bob's proof, then computes $\alpha' = \mathrm{Dec}_A(c_B)$ and $\alpha = \alpha' \bmod q$ (her output share).

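The range proofs ensure that $a$ and $b$ are small enough that the decryption doesn't overflow. Specifically, $\alpha' = ab + \beta'$ (computed over the integers), and then $\alpha = \alpha' \bmod q$. If $a$ and $b$ are in range, $ab + \beta'$ doesn't wrap around modulo $N$, and we get $\alpha + \beta \equiv ab + \beta' - \beta' \equiv ab \pmod q$ as desired.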
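The honest flow can be checked with a toy Python sketch using textbook Paillier. The parameters are our own illustrative assumptions, far too small for real use: $q = 1019$ stands in for the curve order, Alice's Paillier primes are the well-known primes $10^9+7$ and $10^9+9$, Bob's mask $\beta'$ is drawn below $q^5$ (our choice, so that $ab + \beta'$ stays far below $N$), and the range proofs are omitted. The helpers `enc`, `dec`, and `mta` are hypothetical names.

```python
import math
import secrets

Q = 1019                                  # stand-in for the curve order q (toy)
P1, P2 = 1_000_000_007, 1_000_000_009     # Alice's Paillier primes (toy, far too small)
N, N2 = P1 * P2, (P1 * P2) ** 2
LAM = (P1 - 1) * (P2 - 1) // math.gcd(P1 - 1, P2 - 1)   # lcm(p1 - 1, p2 - 1)
MU = pow(LAM, -1, N)                      # valid because we use the generator N + 1

def enc(m: int) -> int:
    """Paillier encryption with generator N + 1: (1 + m*N) * r^N mod N^2."""
    r = secrets.randbelow(N - 1) + 1
    while math.gcd(r, N) != 1:
        r = secrets.randbelow(N - 1) + 1
    return (1 + m * N) * pow(r, N, N2) % N2

def dec(c: int) -> int:
    """Paillier decryption: L(c^lambda mod N^2) * mu mod N, where L(u) = (u - 1) // N."""
    return (pow(c, LAM, N2) - 1) // N * MU % N

def mta(a: int, b: int) -> tuple[int, int]:
    """One honest MtA run with the range proofs left out. Returns (alpha, beta)."""
    c_a = enc(a)                                    # Alice -> Bob
    beta_prime = secrets.randbelow(Q**5)            # Bob's mask
    c_b = pow(c_a, b, N2) * enc(beta_prime) % N2    # Enc(a*b + beta') via the homomorphism
    beta = -beta_prime % Q                          # Bob's output share
    alpha = dec(c_b) % Q                            # Alice's output share
    return alpha, beta

a, b = secrets.randbelow(Q), secrets.randbelow(Q)
alpha, beta = mta(a, b)
assert (alpha + beta) % Q == a * b % Q
```

Because $ab + \beta' < N$ for in-range inputs here, decryption never wraps, which is exactly the property the range proofs are meant to guarantee.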

The "Fast" Version

The GG18 paper proposed a variant (MtAwc) that omits the range proofs. Instead, $B = g^b$ and $B' = g^{\beta'}$ are published, and the authors conjectured that the public values $B$ and $B'$ "do not leak any additional data and compensate for the missing range proof." They were wrong.

The Attack

The attacker (say, Alice/Party 1) chooses a malicious $a$ that is much larger than the protocol expects: an honest $a$ should be roughly $q$-sized, but the attacker picks $a$ large enough that $ab + \beta'$ can exceed $N$.

Bob proceeds honestly: computes $c_B = c_A^{\,b} \cdot \mathrm{Enc}_A(\beta') \bmod N^2$ and sends $c_B$, along with $B' = g^{\beta'}$.

Alice decrypts: $\alpha' = \mathrm{Dec}_A(c_B) = (ab + \beta') \bmod N$. Let's denote the original value over the integers (without modular reduction) by $\tilde{\alpha} = ab + \beta'$.

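Now, $\alpha' = \tilde{\alpha} \bmod N$, so $\tilde{\alpha} = \alpha' + tN$ for some integer $t \ge 0$ that counts how many times the value wrapped around. The key observation: depending on the size of $b$, $\tilde{\alpha}$ will fall into one of three disjoint ranges: $[0, N)$, $[N, 2N)$, or $[2N, 3N)$, corresponding to $t = 0, 1, 2$.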

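Alice computes $B^a \cdot B' = g^{ab + \beta'}$ (using the public values $B$ and $B'$) and checks which case holds, that is, for which $t$ we have $g^{\alpha' + tN} = B^a \cdot B'$. This reveals which interval $\tilde{\alpha}$ belongs to, which in turn reveals information about $b$: since Alice knows $a$, placing $ab + \beta'$ in $[tN, (t+1)N)$ confines $b$ to a range of width about $N/a$.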

Alice just learned a constraint on Bob's secret from a single MtA execution.
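The single-execution leak can be simulated in a few lines. This is a toy sketch under our own assumptions: the order-$q$ subgroup of $\mathbb{Z}_p^*$ with $p = 2039$, $q = 1019$, $g = 4$; a Paillier modulus built from $10^9+7$ and $10^9+9$; and Alice's decryption modeled directly as $(ab + \beta') \bmod N$, which is what a correct Paillier decryption returns, so the encryption layer is skipped. The malicious input $a = \lfloor 3N/q \rfloor$ is our illustrative choice that yields at most three wrap counts ($t = 0, 1, 2$); it is not taken from the Alpha-Rays paper. `bob_respond` and `count_wraps` are hypothetical names.

```python
import secrets

P, Q, G = 2039, 1019, 4                 # toy group (assumption): G has prime order Q mod P
N = 1_000_000_007 * 1_000_000_009       # toy Paillier modulus (assumption)

def bob_respond(a: int, b: int) -> tuple[int, int]:
    """Honest Bob, range-proof-free variant. Returns Alice's decryption and B' = g^beta'."""
    beta_prime = secrets.randbelow(Q**5)
    alpha_prime = (a * b + beta_prime) % N      # what Dec_A(c_B) yields: reduced mod N
    return alpha_prime, pow(G, beta_prime, P)

def count_wraps(a: int, alpha_prime: int, B: int, B_prime: int, max_t: int) -> int:
    """Alice: find t with g^(alpha' + t*N) == B^a * B', i.e. a*b + beta' == alpha' + t*N."""
    target = pow(B, a, P) * B_prime % P
    for t in range(max_t + 1):
        if pow(G, (alpha_prime + t * N) % Q, P) == target:
            return t
    raise ValueError("wrap count not in the expected range")

b = secrets.randbelow(Q)      # Bob's secret
B = pow(G, b, P)              # public B = g^b
a = 3 * N // Q                # malicious input (our illustrative choice): a*b + beta' < 3N
alpha_prime, B_prime = bob_respond(a, b)
t = count_wraps(a, alpha_prime, B, B_prime, max_t=2)
print(f"wrap count t = {t}: a*b + beta' lies in [{t}N, {t + 1}N)")
```

With this choice of $a$, the wrap count is essentially $\lfloor 3b/q \rfloor$, so one run tells Alice whether $b$ sits in the lower, middle, or upper third of $[0, q)$.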

Generalizing the Attack

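Alice can scale up her malicious $a$ by some integer factor $k$. Now $\tilde{\alpha}$ can fall into about $k$ times as many intervals $[tN, (t+1)N)$. Each signature reveals which interval, giving Alice a constraint: $tN \le ab + \beta' < (t+1)N$, which pins down $b$ about $k$ times more precisely.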

After just a few signatures (each with a different malicious value), Alice reconstructs Bob's entire secret key.
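Here is one way the repeated-query idea could be turned into full recovery in the same toy setting. It is our own reconstruction, not necessarily the strategy from the Alpha-Rays paper: Alice uses $a = \lfloor K N / q \rfloor$ with $K = 2^4, 2^8, 2^{12}$, so the wrap count is roughly $\lfloor K b / q \rfloor$. Each previous answer narrows the next wrap count to a window of about $2^4$ candidates, which keeps the brute-force search small, and a final check against $B = g^b$ confirms the result. The sketch relies on Bob's mask being tiny compared to $N$ (here $\beta' < q^5$); `make_bob`, `find_wraps`, and `recover_share` are hypothetical names.

```python
import secrets

P, Q, G = 2039, 1019, 4                 # toy group (assumption): G has prime order Q mod P
N = 1_000_000_007 * 1_000_000_009       # toy Paillier modulus (assumption)
STEP = 4                                 # bits of precision gained per malicious MtA (our choice)

def make_bob(b: int):
    """Simulated honest Bob holding secret b; each call is one MtA with a fresh beta'."""
    def respond(a: int) -> tuple[int, int]:
        beta_prime = secrets.randbelow(Q**5)
        return (a * b + beta_prime) % N, pow(G, beta_prime, P)   # (Dec_A(c_B), B')
    return respond

def find_wraps(a: int, alpha_prime: int, B: int, B_prime: int, candidates) -> int:
    """Return the t in candidates with g^(alpha' + t*N) == B^a * B'."""
    target = pow(B, a, P) * B_prime % P
    for t in candidates:
        if t >= 0 and pow(G, (alpha_prime + t * N) % Q, P) == target:
            return t
    raise ValueError("wrap count outside the candidate window")

def recover_share(respond, B: int) -> tuple[int, int]:
    """Alice: recover b using only Bob's responses and the public B = g^b."""
    t, bits, runs = 0, 0, 0
    while (1 << bits) < 4 * Q:               # stop once q / K < 1/4
        bits += STEP
        K = 1 << bits
        a = K * N // Q                       # wrap count is now about floor(K * b / q)
        alpha_prime, B_prime = respond(a)
        runs += 1
        # The previous estimate confines the new wrap count to a window of ~2^STEP values.
        window = range((t << STEP) - 2, ((t + 1) << STEP) + 3)
        t = find_wraps(a, alpha_prime, B, B_prime, window)
    guess = t * Q // K                       # b is guess or guess + 1
    for cand in (guess - 1, guess, guess + 1):
        if pow(G, cand % Q, P) == B:
            return cand % Q, runs
    raise ValueError("recovery failed")

b = secrets.randbelow(Q)
recovered, runs = recover_share(make_bob(b), pow(G, b, P))
assert recovered == b
print(f"recovered b = {recovered} after {runs} malicious MtA runs")
```

In this sketch each run adds 4 bits of precision, so a 256-bit $q$ would need about 64 runs; a wider window trades more group exponentiations per run for fewer runs.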

Why It Happens

The "additional data" $B = g^b$ and $B' = g^{\beta'}$ were supposed to prevent leakage. But when Alice chooses an out-of-range $a$, the decryption creates a side channel: comparing $g^{\alpha'}$ with $B^a \cdot B'$ reveals how much modular reduction occurred, which leaks information about $b$.

This is the "Attack on absent range proofs" from "Alpha-Rays: Key Extraction Attacks on Threshold ECDSA Implementations". The paper reports impact on ZenGo and ING libraries.

The Fix: Use the "slow" version with range proofs. Modern protocols like CGGMP20 include proper range proofs for all MtA inputs. Don't skip them.

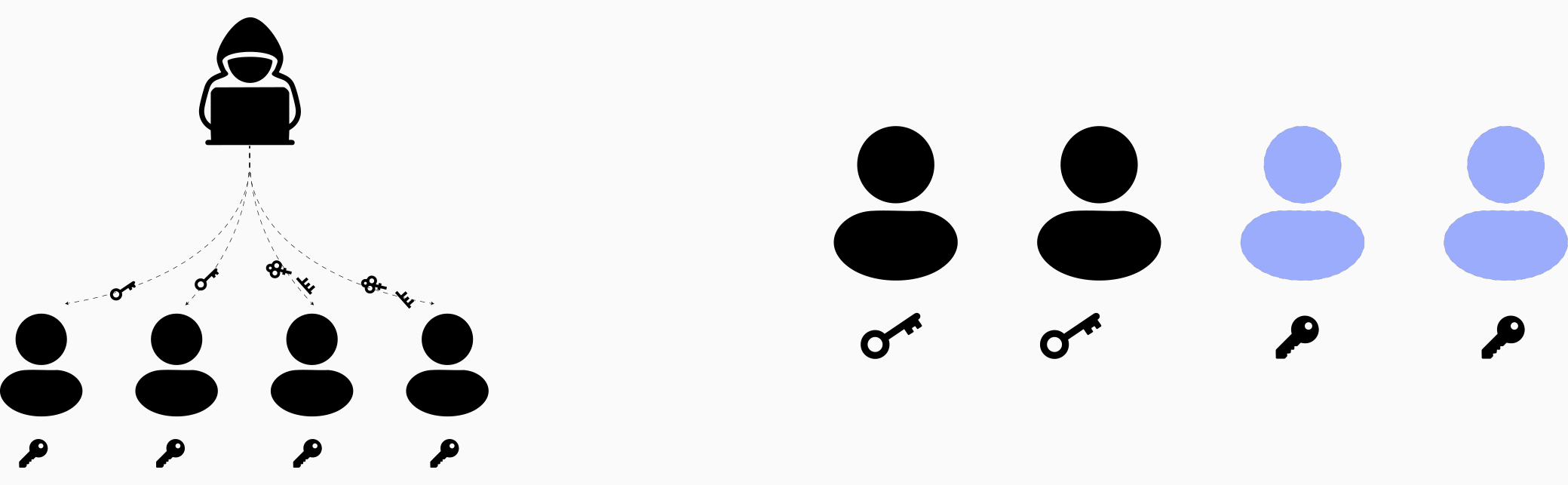

Key resharing protocols are supposed to refresh shares without changing the underlying secret. This maintains continuous access to funds while limiting the damage from gradual key compromise. But if the protocol lacks proper coordination around when to delete old shares, an attacker can weaponize it into a permanent wallet lockout.

The Reshare Protocol

The vulnerable implementation uses VSS-based resharing in a threshold setting. The high-level flow:

The Attack

The adversary (one of the old parties) exploits step 4 by sending selectively malformed shares:

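At step 5, the verification outcome splits the new parties into two groups:

Group A received well-formed shares: verification passes, and they continue with the protocol.

Group B received malformed shares: verification fails, and they abort.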

Now here's the problem: there's no synchronization before deletion. Parties in Group A don't know that Group B aborted. They delete their old shares and switch to the new ones.

The Consequence: The secret can no longer be reconstructed. Mixing old and new shares doesn't work: they come from two different sharings, so they cannot be combined into the same secret. Even if all parties cooperate, they can't generate a valid signature. The wallet is permanently locked.
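The core of the lockout, that old and new shares do not combine, can be checked with a toy Shamir sharing. Everything here is a simplified model of our own, not the vulnerable implementation: a 2-of-3 sharing over $\mathbb{F}_p$ with $p = 2^{61} - 1$; a reshare in which old parties 1 and 2 each re-share their Lagrange-weighted share; VSS verification modeled only by which party received a tampered sub-share; and an adversary (party 1) that afterwards walks away with its own shares. `share`, `lagrange_coeff`, and `reconstruct` are hypothetical helpers.

```python
import secrets

PRIME = 2**61 - 1   # field modulus for the toy sharing (a Mersenne prime)

def share(secret: int, ids) -> dict[int, int]:
    """2-of-n Shamir sharing on the random line f(z) = secret + c*z."""
    c = secrets.randbelow(PRIME)
    return {i: (secret + c * i) % PRIME for i in ids}

def lagrange_coeff(i: int, ids) -> int:
    """Lagrange coefficient of point i for evaluating at z = 0 from the points in ids."""
    num, den = 1, 1
    for j in ids:
        if j != i:
            num = num * (-j) % PRIME
            den = den * (i - j) % PRIME
    return num * pow(den, -1, PRIME) % PRIME

def reconstruct(points: dict[int, int]) -> int:
    return sum(y * lagrange_coeff(i, points) for i, y in points.items()) % PRIME

ids = [1, 2, 3]
secret = secrets.randbelow(PRIME)
old = share(secret, ids)

# Reshare: dealers 1 and 2 each share lambda_d * old_d; new_j is the sum of sub-shares.
dealers = [1, 2]
sub = {d: share(lagrange_coeff(d, dealers) * old[d] % PRIME, ids) for d in dealers}
sub[1][3] = (sub[1][3] + 1) % PRIME   # adversary (dealer 1) tampers only with party 3's sub-share
new = {j: (sub[1][j] + sub[2][j]) % PRIME for j in ids}

# Party 2 (Group A) verifies fine, deletes old[2], keeps new[2].
# Party 3 (Group B) detects the bad sub-share, aborts, keeps old[3].
survivors = {2: new[2], 3: old[3]}
print("old shares 2,3 recover the secret:", reconstruct({2: old[2], 3: old[3]}) == secret)
print("mixed survivors recover the secret:", reconstruct(survivors) == secret)
```

The first line prints `True` and the second `False` (barring a coincidence with probability about $2^{-61}$): the two surviving honest parties hold points on two different lines.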

This is the Forget-and-Forgive attack described in "Attacking Threshold Wallets".

The Fix: Add a blame phase before deletion. After verification (step 5), parties must coordinate:
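One way to realize that coordination is an all-or-nothing commit step. The sketch below is hypothetical (the `Party` record and `finalize_reshare` are our own names) and assumes a reliable broadcast, so every honest party sees the same set of verdicts. Old shares are deleted only if every party reports a successful verification; otherwise everyone rolls back to the old sharing and the complaints feed a blame phase.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class Party:
    pid: int
    old_share: Optional[int]
    new_share: Optional[int]
    verified: bool   # result of this party's own VSS checks at step 5

def finalize_reshare(parties: list[Party]) -> bool:
    """Delete old shares only if every party's broadcast verdict is 'ok'."""
    verdicts = {p.pid: p.verified for p in parties}   # stands in for a reliable broadcast
    if all(verdicts.values()):
        for p in parties:
            p.old_share = None                          # commit: switch to the new sharing
        return True
    for p in parties:
        p.new_share = None                              # roll back: everyone keeps old shares
    complaints = [pid for pid, ok in verdicts.items() if not ok]
    print(f"reshare aborted, complaints from parties {complaints}; entering blame phase")
    return False

parties = [Party(2, 20, 21, True), Party(3, 30, 31, False)]
assert finalize_reshare(parties) is False
assert all(p.old_share is not None for p in parties)   # nobody lost the old sharing
```

The design choice is that deletion becomes a collective decision rather than a local one, so a party that aborted can no longer be silently left behind.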

Nonce reuse in digital signatures is a classic footgun. Everyone knows the story: reuse your nonce in ECDSA or Schnorr, and your private key leaks. The standard defenses are either (1) sample a fresh random nonce for every signature, or (2) derive the nonce deterministically as $k = H(x \parallel m)$ from the secret key $x$ and the message $m$.

Option 2 seems perfect for single-party signing: it's deterministic, so you can't accidentally reuse randomness, and it binds the nonce to both the message and the secret key. But in threshold signatures, determinism becomes a weapon.

Why Nonce Reuse Breaks Schnorr

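Quick reminder of why nonce reuse is fatal. Suppose you sign two different messages $m_1$ and $m_2$ with the same nonce $k$ (so the same public nonce $R = g^k$), using secret key $x$ and public key $X = g^x$:

$$s_1 = k + e_1 x, \qquad s_2 = k + e_2 x,$$

where $e_1 = H(R \parallel X \parallel m_1)$ and $e_2 = H(R \parallel X \parallel m_2)$. Subtract:

$$s_1 - s_2 = (e_1 - e_2)\,x \quad\Longrightarrow\quad x = \frac{s_1 - s_2}{e_1 - e_2} \bmod q.$$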

Game over. The attacker recovers your secret key from two signatures.
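The two-signature key recovery can be checked numerically. This is a toy sketch with assumed parameters: the order-$q$ subgroup of $\mathbb{Z}_p^*$ with $p = 2039$, $q = 1019$, $g = 4$, and SHA-256 reduced mod $q$ as the challenge hash $H$; the message strings are made up.

```python
import hashlib
import secrets

P, Q, G = 2039, 1019, 4   # toy group (assumption): G has prime order Q modulo P

def H(*parts) -> int:
    """Toy challenge hash: SHA-256 of the joined parts, reduced mod Q."""
    data = b"|".join(str(x).encode() for x in parts)
    return int.from_bytes(hashlib.sha256(data).digest(), "big") % Q

def sign(x: int, X: int, msg: str, k: int) -> tuple[int, int, int]:
    """Schnorr signature with an explicit nonce k; also returns e (public, recomputable)."""
    R = pow(G, k, P)
    e = H(R, X, msg)
    return R, (k + e * x) % Q, e

x = secrets.randbelow(Q - 1) + 1
X = pow(G, x, P)
k = secrets.randbelow(Q)                     # the bug: one nonce for two messages
_, s1, e1 = sign(x, X, "message 1", k)
i = 2
while True:                                  # pick a second message with a different challenge
    _, s2, e2 = sign(x, X, f"message {i}", k)
    if e2 != e1:
        break
    i += 1
# s1 - s2 = (e1 - e2) * x, so x = (s1 - s2) / (e1 - e2) mod Q
recovered = (s1 - s2) * pow((e1 - e2) % Q, -1, Q) % Q
assert recovered == x
```

The retry loop only matters because $q$ is tiny here; with a real 256-bit group order, two distinct messages give distinct challenges with overwhelming probability.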

The Threshold Setting

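Now consider two-party Schnorr (a toy model, but the idea generalizes). Alice and Bob hold shares $x_A$ and $x_B$ of the secret key $x = x_A + x_B$, with public key $X = g^{x}$. To sign message $m$:

1. Nonces: Each party derives its nonce deterministically, $k_A = H(x_A \parallel m)$ and $k_B = H(x_B \parallel m)$. They exchange public nonces $R_A = g^{k_A}$ and $R_B = g^{k_B}$. The aggregate public nonce is $R = R_A \cdot R_B$.
2. Partial signatures: Each computes the challenge $e = H(R \parallel X \parallel m)$ and produces:

$$s_A = k_A + e\,x_A, \qquad s_B = k_B + e\,x_B.$$

The final signature is $(R, s_A + s_B)$.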

So far, so good. Each party's nonce depends on their own secret key and the message, so it looks safe.
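The honest two-party flow can be sketched in the same toy group ($p = 2039$, $q = 1019$, $g = 4$, SHA-256 reduced mod $q$), all assumptions for illustration. For brevity both parties' steps run inside one hypothetical `two_party_sign` function instead of exchanging messages, and the deterministic nonce is $H(\texttt{"nonce"}, x_i, m)$, our own domain-separated stand-in for $H(x_i \parallel m)$.

```python
import hashlib
import secrets

P, Q, G = 2039, 1019, 4   # toy group (assumption): G has prime order Q modulo P

def H(*parts) -> int:
    """Toy hash to Z_Q: SHA-256 of the joined parts, reduced mod Q."""
    data = b"|".join(str(x).encode() for x in parts)
    return int.from_bytes(hashlib.sha256(data).digest(), "big") % Q

def nonce(x_i: int, msg: str) -> int:
    """Deterministic nonce k_i = H(x_i || m), domain-separated with a 'nonce' tag."""
    return H("nonce", x_i, msg)

def two_party_sign(x_a: int, x_b: int, X: int, msg: str) -> tuple[int, int]:
    k_a, k_b = nonce(x_a, msg), nonce(x_b, msg)
    R = pow(G, k_a, P) * pow(G, k_b, P) % P              # R = R_A * R_B
    e = H(R, X, msg)                                      # shared challenge
    s_a, s_b = (k_a + e * x_a) % Q, (k_b + e * x_b) % Q   # partial signatures
    return R, (s_a + s_b) % Q

def verify(X: int, msg: str, R: int, s: int) -> bool:
    return pow(G, s, P) == R * pow(X, H(R, X, msg), P) % P

x_a, x_b = secrets.randbelow(Q), secrets.randbelow(Q)
X = pow(G, (x_a + x_b) % Q, P)
R, s = two_party_sign(x_a, x_b, X, "transfer 1 BTC")
assert verify(X, "transfer 1 BTC", R, s)
```

Verification works because $g^{s_A + s_B} = g^{k_A + k_B} \cdot g^{e(x_A + x_B)} = R \cdot X^e$.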

The Attack

Here's the problem: Alice (the attacker) controls her own nonce. She can choose to use a different nonce on the second signing request, even if the message is the same.

First signature (on message $m$): Alice uses nonce $k_A$, so $R = R_A \cdot R_B$ and $e = H(R \parallel X \parallel m)$.

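Second signature (on the same message $m$): Alice uses a different nonce $k_A' \ne k_A$, so $R' = R_A' \cdot R_B$ and $e' = H(R' \parallel X \parallel m)$, which differs from $e$ with overwhelming probability. Bob's nonce $k_B = H(x_B \parallel m)$ is unchanged.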

Alice now has two partial signatures from Bob:

$$s_B = k_B + e\,x_B, \qquad s_B' = k_B + e'\,x_B.$$

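Bob reused $k_B$, but with different challenges. Subtract:

$$s_B - s_B' = (e - e')\,x_B \quad\Longrightarrow\quad x_B = \frac{s_B - s_B'}{e - e'} \bmod q.$$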

Alice extracts Bob's secret share. With both $x_A$ (her own) and $x_B$ (stolen), she can now sign arbitrary messages unilaterally.
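The extraction can be reproduced with an honest, deterministic Bob and a malicious Alice in the same toy group ($p = 2039$, $q = 1019$, $g = 4$, SHA-256 reduced mod $q$), all assumptions for illustration. The `Bob` class is a hypothetical model whose only job is to answer partial-signing requests the way the protocol prescribes.

```python
import hashlib
import secrets

P, Q, G = 2039, 1019, 4   # toy group (assumption): G has prime order Q modulo P

def H(*parts) -> int:
    data = b"|".join(str(x).encode() for x in parts)
    return int.from_bytes(hashlib.sha256(data).digest(), "big") % Q

class Bob:
    """Honest Bob with a deterministic nonce k_B = H(x_B || m)."""
    def __init__(self, x_b: int):
        self._x_b = x_b
    def _k(self, msg: str) -> int:
        return H("nonce", self._x_b, msg)
    def public_nonce(self, msg: str) -> int:
        return pow(G, self._k(msg), P)                  # R_B, identical in every session for msg
    def partial_sign(self, msg: str, R_a: int, X: int) -> int:
        R = R_a * self.public_nonce(msg) % P            # aggregate nonce R = R_A * R_B
        return (self._k(msg) + H(R, X, msg) * self._x_b) % Q

x_a, x_b = secrets.randbelow(Q), secrets.randbelow(Q)
X = pow(G, (x_a + x_b) % Q, P)
bob, msg = Bob(x_b), "transfer 1 BTC"
R_b = bob.public_nonce(msg)

# Malicious Alice: two sessions on the same message, each with a different nonce of hers.
while True:
    k1, k2 = secrets.randbelow(Q), secrets.randbelow(Q)
    R_a1, R_a2 = pow(G, k1, P), pow(G, k2, P)
    e1, e2 = H(R_a1 * R_b % P, X, msg), H(R_a2 * R_b % P, X, msg)
    if e1 != e2:                                        # toy-sized Q: retry on a challenge collision
        break
s1, s2 = bob.partial_sign(msg, R_a1, X), bob.partial_sign(msg, R_a2, X)
# s1 - s2 = (e1 - e2) * x_B because Bob's k_B was the same in both sessions.
stolen = (s1 - s2) * pow((e1 - e2) % Q, -1, Q) % Q
assert stolen == x_b
```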

This scenario was also covered in one of Jake Craige's articles.

Most threshold signature schemes are proven secure under static corruption: the adversary chooses which parties to corrupt before the protocol starts. But what about adaptive corruption, where the adversary can corrupt parties at any time, even after seeing public keys, commitments, and partial signatures?

Adaptive security is harder to achieve and harder to prove. A recent paper asks: are widely-deployed threshold Schnorr schemes actually adaptively secure? The answer might be no, if a certain computational problem turns out to be easier than we think.

The Setup

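Consider a threshold Schnorr scheme where:

1. The secret key $x$ is shared with a random degree-$t$ polynomial $f(z) = a_0 + a_1 z + \cdots + a_t z^t$ with $f(0) = a_0 = x$, so any $t+1$ parties can sign.
2. The public key is $X = g^{x}$, and each party's public key share is $X_i = g^{x_i}$ with $x_i = f(\omega_i)$, where $\omega_i$ are public evaluation points and $x_i$ are the secret shares.
3. Signatures are Schnorr-compatible: verification checks $g^s = R \cdot X^c$ where $c = H(R \parallel X \parallel m)$.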

This describes FROST and most modern threshold Schnorr variants.

The Problem

The authors propose a computational problem. If this problem is solvable in polynomial time, adaptive security collapses for the above schemes.

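Subset-Span Problem: Given a target vector $u \in \mathbb{Z}_q^{t+1}$ and vectors $v_1, \ldots, v_n \in \mathbb{Z}_q^{t+1}$, find a subset $S \subseteq \{1, \ldots, n\}$ with $|S| \le t$ such that $u \in \operatorname{span}\{v_i : i \in S\}$ (if one exists).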

In other words: can you find a small subset of vectors whose span contains the target?
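For intuition, here is a brute-force Subset-Span solver for tiny parameters. It is our own illustrative sketch (`solve_mod` and `subset_span` are hypothetical helpers): it tries every subset of size at most $t$ and tests span membership by Gaussian elimination over $\mathbb{Z}_q$. Its running time grows with $\binom{n}{t}$, which is exactly the exhaustive search an efficient attack would need to avoid.

```python
from itertools import combinations

def solve_mod(cols, target, q):
    """Find lam with sum(lam[c] * cols[c]) == target (mod prime q), or None."""
    d, m = len(target), len(cols)
    A = [[cols[c][r] % q for c in range(m)] + [target[r] % q] for r in range(d)]
    pivots, row = [], 0
    for col in range(m):
        pr = next((r for r in range(row, d) if A[r][col]), None)
        if pr is None:
            continue                                   # free column
        A[row], A[pr] = A[pr], A[row]
        inv = pow(A[row][col], -1, q)
        A[row] = [v * inv % q for v in A[row]]
        for r in range(d):
            if r != row and A[r][col]:
                f = A[r][col]
                A[r] = [(v - f * w) % q for v, w in zip(A[r], A[row])]
        pivots.append(col)
        row += 1
    if any(A[r][m] for r in range(row, d)):
        return None                                    # inconsistent: target not in the span
    lam = [0] * m
    for r, col in enumerate(pivots):
        lam[col] = A[r][m]                             # free variables set to 0
    return lam

def subset_span(target, vecs, t, q):
    """Exhaustive search for S with |S| <= t and target in span{vecs[i] : i in S}."""
    for size in range(1, t + 1):
        for S in combinations(range(len(vecs)), size):
            lam = solve_mod([vecs[i] for i in S], target, q)
            if lam is not None:
                return list(S), lam
    return None

q, t = 1019, 2
vecs = [[1, w, w * w % q] for w in range(1, 9)]                   # v_i = (1, w_i, w_i^2), w_i = 1..8
target = [(3 * a + 5 * b) % q for a, b in zip(vecs[1], vecs[6])]  # 3*v_2 + 5*v_7
S, lam = subset_span(target, vecs, t, q)
combo = [sum(l * vecs[i][r] for l, i in zip(lam, S)) % q for r in range(3)]
assert len(S) <= t and combo == target
```

The solver may return a pair other than $\{v_2, v_7\}$: four Vandermonde vectors in three dimensions are always linearly dependent, so other pairs can span the same target.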

The Attack

Suppose the adversary wants to forge a signature on a fresh message $m^*$. They need to produce $(R, s)$ such that $g^s = R \cdot X^c$ where $c = H(R \parallel X \parallel m^*)$. This requires knowing $s = \log_g(R \cdot X^c)$, the discrete log of the verification equation's right-hand side.

Here's the plan:

Step 1: Choose $R$ carefully

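Let $A_0, \ldots, A_t$, with $A_j = g^{a_j}$, be the commitments to the polynomial coefficients (note: given $X_1, \ldots, X_n$, you can compute $A_0, \ldots, A_t$ via change of basis). Set:

$$R = \prod_{j=0}^{t} A_j^{r_j}$$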

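for random $r_0, \ldots, r_t \in \mathbb{Z}_q$. Since $X = A_0$, we have:

$$R \cdot X^c = A_0^{r_0 + c} \prod_{j=1}^{t} A_j^{r_j} = \prod_{j=0}^{t} A_j^{u_j},$$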

where $u = (r_0 + c, r_1, \ldots, r_t)$ depends on our choice of $R$ (through $c = H(R \parallel X \parallel m^*)$) and can be computed.

Step 2: Express $R \cdot X^c$ as a linear combination of public keys

Define vectors:

$$v_i = (1, \omega_i, \omega_i^2, \ldots, \omega_i^t) \in \mathbb{Z}_q^{t+1}.$$

Note that:

$$X_i = g^{f(\omega_i)} = \prod_{j=0}^{t} A_j^{\omega_i^j}.$$

Now, if we can find coefficients $\lambda_i$ and a subset $S$ with $|S| \le t$ such that:

$$u = \sum_{i \in S} \lambda_i v_i,$$

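then:

$$\prod_{i \in S} X_i^{\lambda_i} = \prod_{j=0}^{t} A_j^{\sum_{i \in S} \lambda_i \omega_i^j} = \prod_{j=0}^{t} A_j^{u_j} = R \cdot X^c.$$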

Taking discrete logs:

$$\log_g(R \cdot X^c) = \sum_{i \in S} \lambda_i x_i.$$

Step 3: Adaptive corruption

The attacker now adaptively corrupts the parties in $S$, learning their secret shares $x_i$ for $i \in S$. Since $|S| \le t$ (below the threshold of $t+1$), this is within the corruption budget. They compute:

$$s = \sum_{i \in S} \lambda_i x_i \bmod q$$

and output the forged signature $(R, s)$ on $m^*$. Verification passes by construction.
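The three steps can be run end to end in a toy group. This sketch rests on our own simplifying assumptions: the order-$q$ subgroup of $\mathbb{Z}_p^*$ with $p = 2039$, $q = 1019$, $g = 4$; a dealer that publishes the commitments $A_j$ directly (instead of deriving them from the $X_i$ by change of basis); evaluation points $\omega_i = i$; $t = 2$; and $n = 64$, so that $\binom{64}{2} = 2016 > q$. Subset-Span is solved by brute force, which is only feasible because $q$ is tiny, and `corrupt` is a hypothetical stand-in for adaptive corruption.

```python
import hashlib
import secrets
from itertools import combinations

P, Q, G = 2039, 1019, 4   # toy group (assumption): G has prime order Q modulo P
T, N_PARTIES = 2, 64      # degree-T sharing, so T+1 signers; C(64, 2) = 2016 > Q

def H(*parts) -> int:
    data = b"|".join(str(x).encode() for x in parts)
    return int.from_bytes(hashlib.sha256(data).digest(), "big") % Q

def solve_mod(cols, target):
    """Find lam with sum(lam[c] * cols[c]) == target (mod Q), or None."""
    d, m = len(target), len(cols)
    A = [[cols[c][r] % Q for c in range(m)] + [target[r] % Q] for r in range(d)]
    pivots, row = [], 0
    for col in range(m):
        pr = next((r for r in range(row, d) if A[r][col]), None)
        if pr is None:
            continue
        A[row], A[pr] = A[pr], A[row]
        inv = pow(A[row][col], -1, Q)
        A[row] = [v * inv % Q for v in A[row]]
        for r in range(d):
            if r != row and A[r][col]:
                f = A[r][col]
                A[r] = [(v - f * w) % Q for v, w in zip(A[r], A[row])]
        pivots.append(col)
        row += 1
    if any(A[r][m] for r in range(row, d)):
        return None
    lam = [0] * m
    for r, col in enumerate(pivots):
        lam[col] = A[r][m]
    return lam

# Honest setup (dealer-style simplification): f(z) = a_0 + a_1 z + a_2 z^2, x = a_0.
coeffs = [secrets.randbelow(Q) for _ in range(T + 1)]
A = [pow(G, a_j, P) for a_j in coeffs]                   # public A_j = g^{a_j}
X = A[0]                                                  # group public key
shares = {i: sum(a_j * pow(i, j, Q) for j, a_j in enumerate(coeffs)) % Q
          for i in range(1, N_PARTIES + 1)}              # secret x_i = f(i)
vecs = {i: [pow(i, j, Q) for j in range(T + 1)] for i in shares}   # public v_i

def corrupt(i: int) -> int:
    """Adaptive corruption: the adversary learns party i's share."""
    return shares[i]

msg = "a message the honest parties never signed"
for attempt in range(1, 201):
    # Step 1: R = prod A_j^{r_j}, so R * X^c = prod A_j^{u_j} with u = (r_0 + c, r_1, ..., r_T).
    r = [secrets.randbelow(Q) for _ in range(T + 1)]
    R = 1
    for A_j, r_j in zip(A, r):
        R = R * pow(A_j, r_j, P) % P
    c = H(R, X, msg)
    u = [(r[0] + c) % Q] + r[1:]
    # Step 2: brute-force Subset-Span over all T-subsets of the public vectors.
    hit = None
    for S in combinations(vecs, T):
        lam = solve_mod([vecs[i] for i in S], u)
        if lam is not None:
            hit = (S, lam)
            break
    if hit:
        break
else:
    raise RuntimeError("no suitable subset found")

# Step 3: corrupt only the parties in S (|S| = T, below the threshold) and assemble s.
S, lam = hit
s = sum(l * corrupt(i) for l, i in zip(lam, S)) % Q
assert pow(G, s, P) == R * pow(X, c, P) % P               # g^s == R * X^c: the forgery verifies
print(f"forged after {attempt} attempt(s) by corrupting parties {S}")
```

Note that the attacker's code touches only public values ($A_j$, $X$, $v_i$) plus the shares of the parties in $S$; the retry loop reflects that a good subset exists for only some choices of $R$.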

Why This Might Work

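For any fixed subset $S$ of size $t$, the probability that a random target vector lies in the span of $\{v_i\}_{i \in S}$ is roughly $1/q$ (very small): those $t$ vectors span a $t$-dimensional subspace, which contains only $q^t$ of the $q^{t+1}$ vectors in $\mathbb{Z}_q^{t+1}$. But there are $\binom{n}{t}$ possible subsets, exponentially many. If $n$ is large enough that $\binom{n}{t} \gtrsim q$, a simple counting argument suggests that some subset will work with non-negligible probability.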

The question is: can we find that subset efficiently? If the problem is solvable in polynomial time, then adaptive security breaks.

The above idea is described in detail in the paper "A Plausible Attack on the Adaptive Security of Threshold Schnorr Signatures".

Implementation bugs are fixable with better testing, stricter validation, and careful reading of security proofs. Protocol issues require understanding what security properties you actually have versus what you think you have. The MtA oracle attack works because the protocol's security argument relied on range proofs that were omitted. The reshare attack works because the protocol lacked synchronization primitives. The adaptive security question for threshold Schnorr shows that even well-analyzed protocols might not hold up under stronger adversarial models.

Threshold schemes remain one of our best tools for distributed trust, but the gap between theory and practice is real and multifaceted. Make sure your implementation and your threat model match what the security proofs actually guarantee.